Note that if you plan to use time zones, all the dates provided should be pendulum dates. DAGs essentially act as namespaces for tasks: a task_id can only be added once to a DAG. InconsistentDataInterval is raised if exactly one of the data interval fields is None.
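To make the namespace idea concrete, here is a minimal sketch in plain Python. It does not use Airflow: MiniDag and add_task are hypothetical names invented for illustration, and the real DAG class does far more. It only shows why a task_id can be registered once per DAG while the same id can be reused in another DAG.

```python
class MiniDag:
    """Toy stand-in for a DAG (hypothetical, not Airflow's class).

    It acts as a namespace that maps task ids to task objects.
    """

    def __init__(self, dag_id):
        self.dag_id = dag_id
        self.tasks = {}

    def add_task(self, task_id, task):
        # A task_id may be registered only once inside a given DAG.
        if task_id in self.tasks:
            raise ValueError(
                f"task_id {task_id!r} already exists in DAG {self.dag_id!r}"
            )
        self.tasks[task_id] = task


# Each DAG is its own namespace, so two DAGs can both hold a task named "load".
etl = MiniDag("etl")
report = MiniDag("report")
etl.add_task("load", object())
report.add_task("load", object())
```

Adding "load" to etl a second time would raise ValueError in this sketch.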

Prior to AIP-39), or both be datetime (for runs scheduled after AIP-39 is executiondate (datetime.datetime) the execution date. runid defines the the run id for this dag run. dagid (int, list) the dagid to find dag runs for.

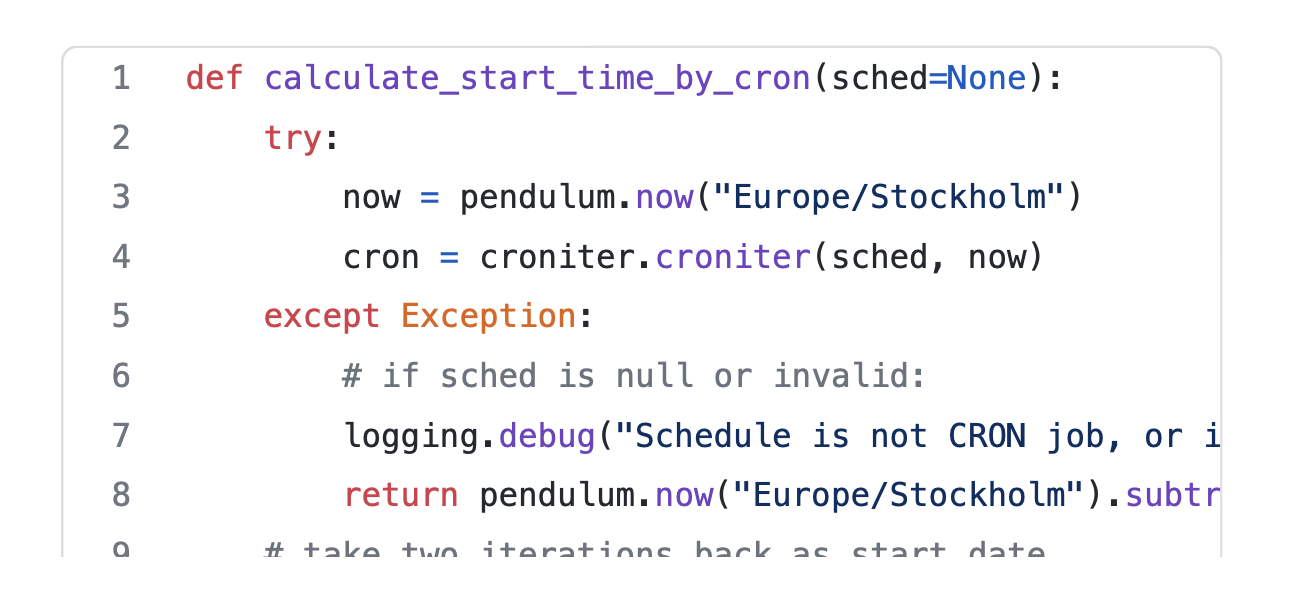

In order to schedule a DAG you need to define the following mandatory parameters: scheduleinterval. DAGs are also evaluated on Airflow workers, it is therefore important to make sure this setting is equal on all Airflow nodes. Returns a set of dag runs for the given search criteria. Mandatory and Optional parameters to schedule a DAG. You can also set it to system or an IANA time zone (e.g. If you’re based in US Pacific Time, a DAG run of 19:00 will correspond to 12:00 local time. Default time zone If you just installed Airflow it will be set to utc, which is recommended. You should not expect your DAG executions to correspond to your local timezone. A simple way to do this is edit your start date and schedule interval, rename your dag (e.g. If this time has expired it will run the dag. This behavior is shared by many databases and APIs, but it’s worth clarifying. To schedule a dag, Airflow just looks for the last execution date and sum the schedule interval. The data interval fields should either both be None (for runs scheduled Airflow stores datetime information in UTC internally and in the database.
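The scheduling rule and the UTC example described above can be sketched with the standard library alone. This is a simplified illustration of the rule as stated here (last execution date plus schedule interval, run once that time has passed), not Airflow's scheduler code. The function names and the fixed UTC-7 offset standing in for US Pacific daylight time are assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone


def next_run_time(last_execution_date, schedule_interval):
    """Add the schedule interval to the last execution date."""
    return last_execution_date + schedule_interval


def is_due(last_execution_date, schedule_interval, now):
    """Return True once the computed next run time has passed."""
    return now >= next_run_time(last_execution_date, schedule_interval)


# Assumption: a fixed UTC-7 offset stands in for US Pacific daylight time.
PDT = timezone(timedelta(hours=-7), "PDT")

# Datetimes are kept in UTC, mirroring how they are stored.
last_run = datetime(2023, 7, 1, 19, 0, tzinfo=timezone.utc)
daily = timedelta(days=1)

next_run = next_run_time(last_run, daily)

# Convert to local time only for display.
local_view = last_run.astimezone(PDT)
```

Keeping every stored value in UTC and converting only when displaying avoids surprises when nodes sit in different time zones.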

InconsistentDataInterval(instance, start_field_name, end_field_name): exception raised when a model populates data interval fields incorrectly.
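The consistency rule behind this exception (both fields None, or both set) can be illustrated with a small standalone check. The class and function below are hypothetical stand-ins written for this example. They are not Airflow's implementation and do not share its signature.

```python
from datetime import datetime, timezone


class DataIntervalError(Exception):
    """Hypothetical stand-in for an inconsistent data interval error."""


def check_data_interval(start, end):
    """Accept (None, None) or two datetimes; reject exactly one None."""
    if (start is None) != (end is None):
        raise DataIntervalError(
            "data interval start and end must both be None or both be set"
        )
    return start, end


# Both None: accepted.
legacy = check_data_interval(None, None)

# Both datetimes: accepted.
start = datetime(2023, 7, 1, tzinfo=timezone.utc)
end = datetime(2023, 7, 2, tzinfo=timezone.utc)
current = check_data_interval(start, end)
```

Passing a datetime for one field and None for the other raises DataIntervalError in this sketch.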
